SLM Swarm Intelligence

SLM(Small Language Model) Swarm Intelligence

What is it?

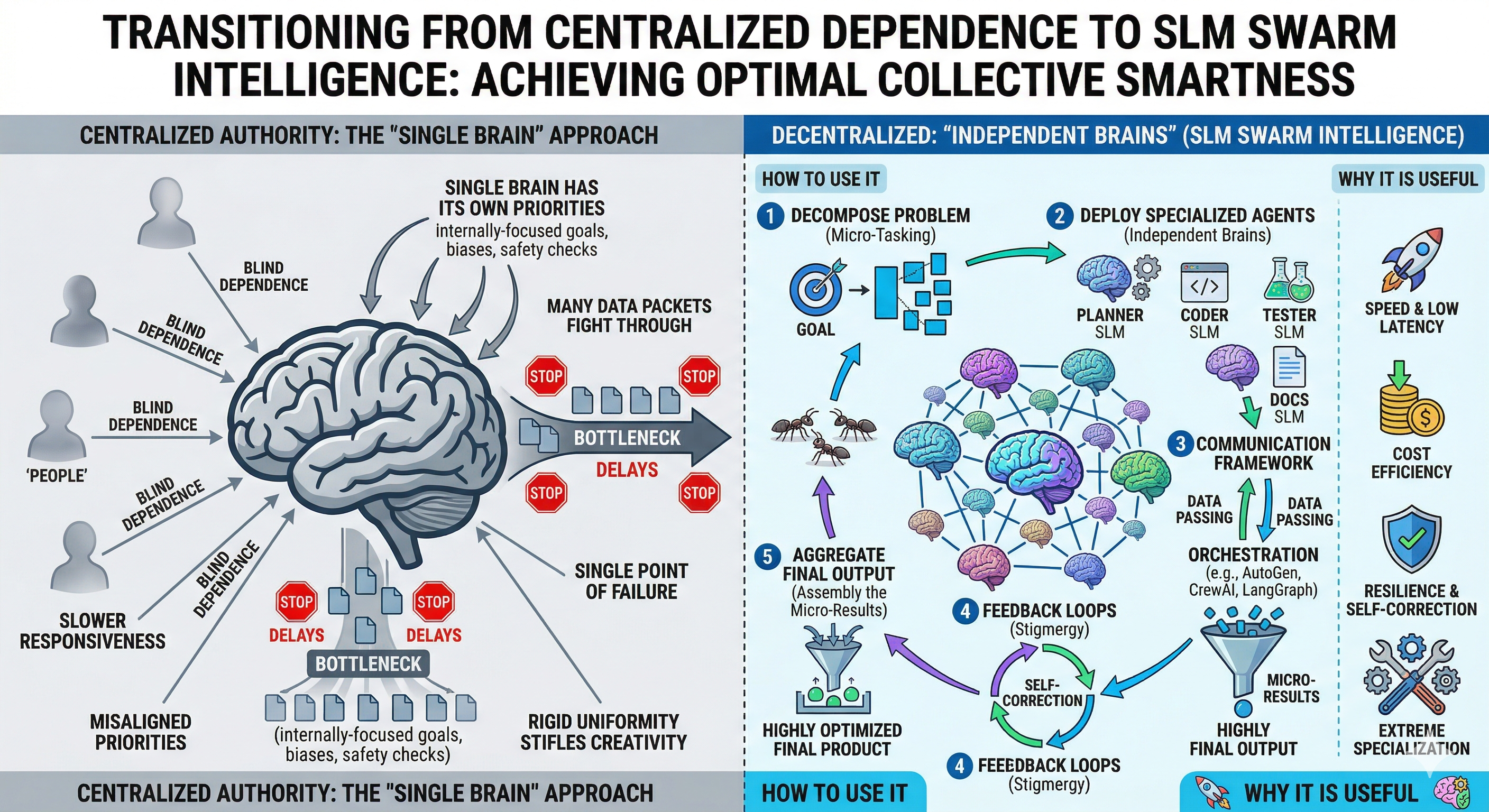

SLM Swarm Intelligence can be understood as an enterprise-level AI design approach where each business unit owns its own intelligence.

Instead of depending on one large, generic AI model for all use cases, organizations can deploy multiple Small Language Models (SLMs), each trained on the specific data, rules, and workflows of individual teams like Finance, HR, Operations, etc.

Each SLM acts as a domain expert within its own boundary handling team-specific decisions and logic. These models are lightweight, focused, and deployed within the team’s infrastructure, ensuring better alignment with business requirements. At an organizational level, these systems can be connected through a coordination layer to solve larger cross-functional problems.

Perspective

This is not about completely removing central systems, but about rebalancing intelligence.

Why is it useful?

This approach reduces dependency on a centralized AI system and aligns intelligence directly with business ownership.

Better alignment with business rules: Each team’s SLM is trained on its own logic, avoiding conflicts that typically arise in generalized models.

Improved speed and performance: Smaller models can run faster and even within local environments, enabling quick decision-making.

Cost optimization: Instead of using a large model for every request, teams use lightweight models tailored to their needs.

Data security and isolation: Sensitive business data remains within the team’s environment, reducing exposure risks.

Higher accuracy in domain tasks: Specialized models perform better for specific use cases compared to a general-purpose system.

In simple terms, every team gets the right intelligence for their job, instead of adjusting to a one-size-fits-all AI.

How to use it?

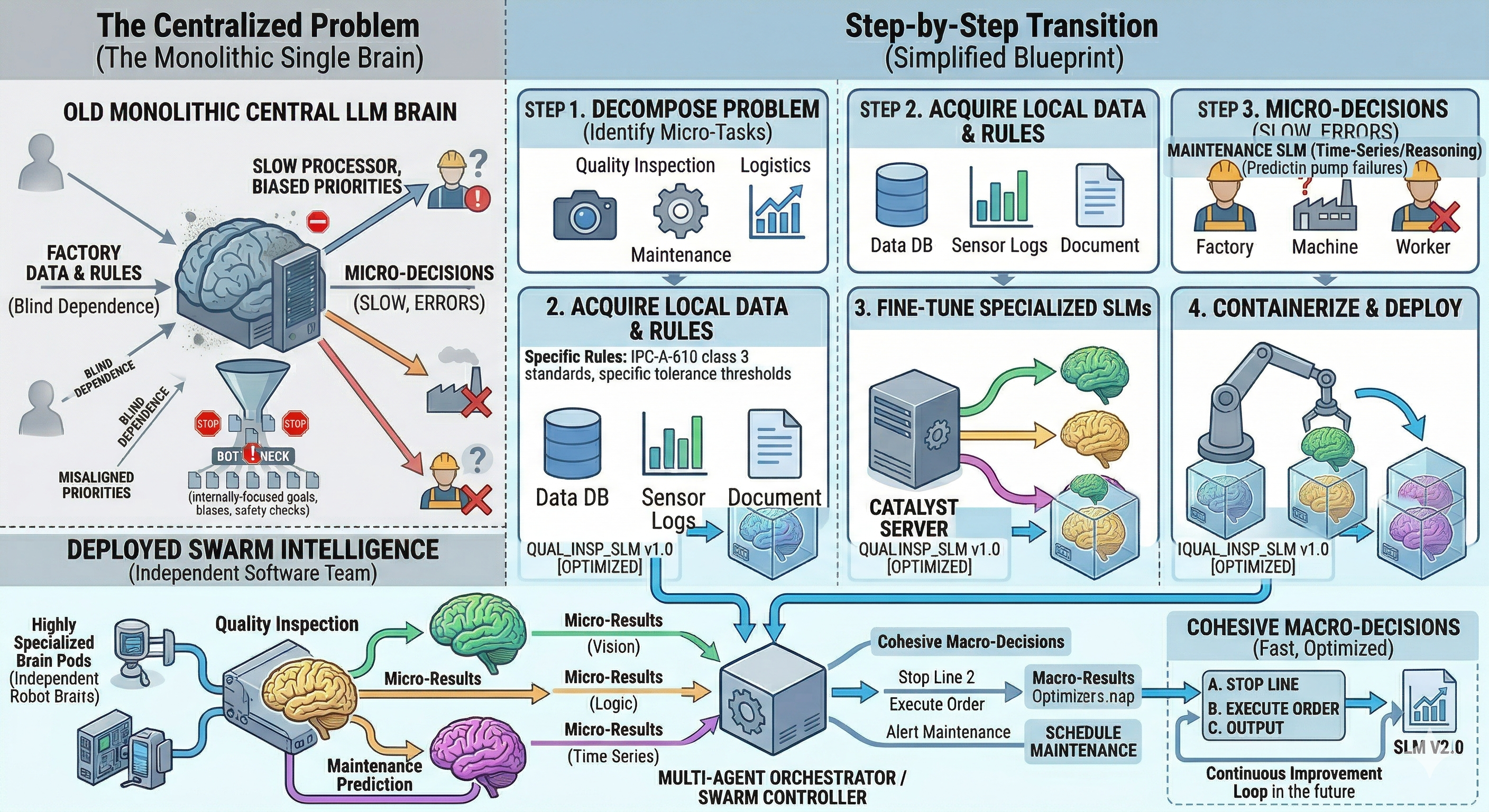

Implementation requires a shift from a centralized AI mindset to a distributed, team-owned model.

Define team-level use cases:

Identify high-frequency, rule-based decisions within each business unit (e.g., validation, classification, approvals).Break down into micro-tasks:

Structure these decisions into smaller, well-defined tasks with clear inputs and outputs.Train specialized SLMs:

Use internal data and business rules to fine-tune small models for each task. Techniques like distillation or few-shot learning can be used.Deploy within team environments:

Each SLM should be hosted close to the team’s systems (applications, APIs, workflows).Set up orchestration for collaboration:

Use frameworks like Microsoft AutoGen, CrewAI, or LangGraph to enable communication between models when cross-team workflows are required.Enable feedback and improvement loops:

Continuously monitor outputs, refine rules, and retrain models to improve performance over time.

Thought process for de-centralizing problem

Thought Process: Decentralizing Intelligence

The goal is to move from centralized intelligence to distributed ownership.

Each team:

Owns its data

Defines its rules

Controls its AI models

At scale, this creates an organization where:

Intelligence is embedded within each function

Decisions are made closer to the source

Systems become more scalable and adaptable

Mitigation: SLM Factory & Standardized Pipelines (How Training Works in Practice)

To effectively manage the complexity introduced by decentralized SLM-based systems, organizations can establish a centralized SLM factory supported by standardized pipelines. This approach not only brings governance and consistency but also enables scalable and repeatable model development across multiple business units.

At a high level, the factory acts as a structured system that transforms business rules and domain data into deployable, specialized models.

The process begins with individual teams defining their business rules, decision logic, and data specifications in a structured format. These are typically well-scoped, high-frequency tasks such as validation, classification, or rule-based decision-making. Clear definition of inputs, outputs, and expected behavior is critical at this stage, as it directly influences model performance.

Once the problem is defined, the next step involves data preparation. Teams collect historical data from their systems, including both successful and failed cases, and convert them into structured input-output pairs. This dataset is then cleaned, standardized, and enriched with edge cases to ensure robustness. In practice, the quality and coverage of this dataset play a more important role than model size itself.

Instead of building models from scratch, organizations maintain a set of pre-trained base models within the factory. These base models already possess general language understanding and serve as the foundation for all downstream specialization. By reusing these models, teams can significantly reduce training time, cost, and infrastructure overhead.

The core step in the pipeline is fine-tuning, where the base model is trained on team-specific data. Through this process, the model learns to apply business rules and generate precise, structured outputs aligned with domain requirements. Depending on the use case, efficient techniques such as parameter-efficient fine-tuning can be used to minimize computational cost while maintaining performance.

Following training, each model undergoes evaluation and validation. This includes testing on unseen data, validating against business rules, and ensuring correct handling of edge cases. Only models that meet predefined quality thresholds are approved for deployment, ensuring reliability in production environments.

Once validated, the SLM is deployed as a service within the team’s infrastructure. Applications can directly interact with this model for decision-making, enabling faster and more context-aware processing compared to centralized systems.

A critical aspect of this architecture is the continuous feedback loop. As business rules evolve and new data becomes available, model outputs are monitored, errors are captured, and datasets are updated. This enables periodic retraining and ensures that each SLM remains aligned with current business requirements.

The SLM factory standardizes all these stages data preparation, training, evaluation, deployment, and versioning into a unified pipeline. It can also maintains a centralized registry of models, ensuring proper version control, traceability, and governance across the organization.

In essence, this approach shifts the focus from ad-hoc model development to a systematic, production-grade pipeline, where business logic is continuously translated into scalable and maintainable AI capabilities.

Example: Two Teams, Two SLMs, One Organization

To better understand how SLM-based distributed intelligence works in practice, consider a simple e-commerce example of two business teams within an organization: the Finance team and the Customer Support team.

The Finance team is responsible for invoice validation and payment processing. Their work depends on strict business rules such as tax compliance, vendor verification, and payment terms. To support this, a specialized Finance SLM cab be trained using internal ERP data, historical invoices, and rule-based validation logic. This model is designed to take structured invoice data as input and return a clear decision such as approved or rejected along with the reason for that decision.

On the other hand, the Customer Support team focuses on handling customer queries and service tickets. Their workflow involves classifying incoming requests, assigning priorities, and ensuring SLA adherence. A Support SLM is trained on historical ticket data, customer interactions, and resolution patterns. This model processes incoming queries and outputs structured classifications such as issue type and priority level.

Individually, both teams benefit significantly from their respective SLMs. The Finance team achieves faster and more accurate invoice validation without manual intervention, while maintaining strict compliance with internal policies. The Support team improves response times and reduces manual effort in ticket triaging, leading to better customer experience.

However, the real value of this approach emerges when these systems work together across teams.

Consider a scenario where a customer raises a billing-related issue. The request is first processed by the Support SLM, which classifies it as a high-priority billing concern. Based on this classification, the system triggers a validation request to the Finance SLM. The Finance SLM analyzes the relevant invoice and identifies a discrepancy, such as an incorrect tax calculation.

This result is then passed back to the Support SLM, which uses the information to generate a clear and context-aware response for the customer. The entire workflow is completed without manual coordination between teams, yet it leverages the deep expertise of both domains.

This example highlights an important shift in system design. Each team operates its own specialized intelligence, optimized for its specific business needs. At the same time, these systems can be connected to handle cross-functional workflows efficiently.

In essence, SLMs enable organizations to move from isolated, team-specific processes to a more integrated and intelligent ecosystem where each unit contributes its expertise while remaining aligned with the broader system.

Advantages (Including Cost Perspective)

When implemented with proper pipelines and governance, this approach delivers strong advantages:

Cost Efficiency: Running smaller, task-specific models significantly reduces compute costs compared to using large models for every request

Optimized Resource Utilization: Base models and shared pipelines prevent duplication of effort across teams

Faster Iteration Cycles: Teams can update only their SLM without impacting the entire system

Scalable Architecture: New business units can onboard quickly using existing factory and standards

Balanced Control: Central governance with decentralized execution ensures both flexibility and consistency.

Drawbacks of Decentralization & Architectural Trade-offs

While adopting an SLM-based distributed intelligence approach helps reduce dependency on a centralized system, it also introduces certain practical challenges.

The primary concern is increased system complexity and higher initial setup effort. Instead of managing a single AI endpoint, engineering teams now need to handle multiple specialized models each requiring its own data preparation, fine-tuning, deployment, and version control.

In addition, building and maintaining the orchestration layer becomes critical. Ensuring that these models communicate reliably, maintain context, and avoid issues like looping workflows or inconsistent outputs requires strong system design and governance.

There is also an ongoing need for:

Monitoring and debugging across multiple models

Consistency management between different business units

Regular updates and retraining as business rules evolve